Unlocking AI PC: 3 Key Roles of SSDs and DRAM in Edge Computing

For years, Artificial Intelligence was something that happened “out there,” in massive, remote cloud servers. When you asked a chatbot a question or generated an image, your computer simply sent the request over the internet and waited for the cloud to do the heavy lifting. But a massive shift is underway: the rise of the AI PC and Edge Computing.

Tech giants are now pushing AI processing directly to your local device (the “edge” of the network). Running AI locally means no network round trips, data that never has to leave your machine, and the ability to work offline. However, running Large Language Models (LLMs) and generative AI applications on your own desk requires serious hardware. While CPUs and NPUs (Neural Processing Units) get most of the marketing hype, the unsung heroes of the AI PC revolution are your memory and storage.

Here are the three critical roles SSDs and DRAM play in the new era of edge computing.

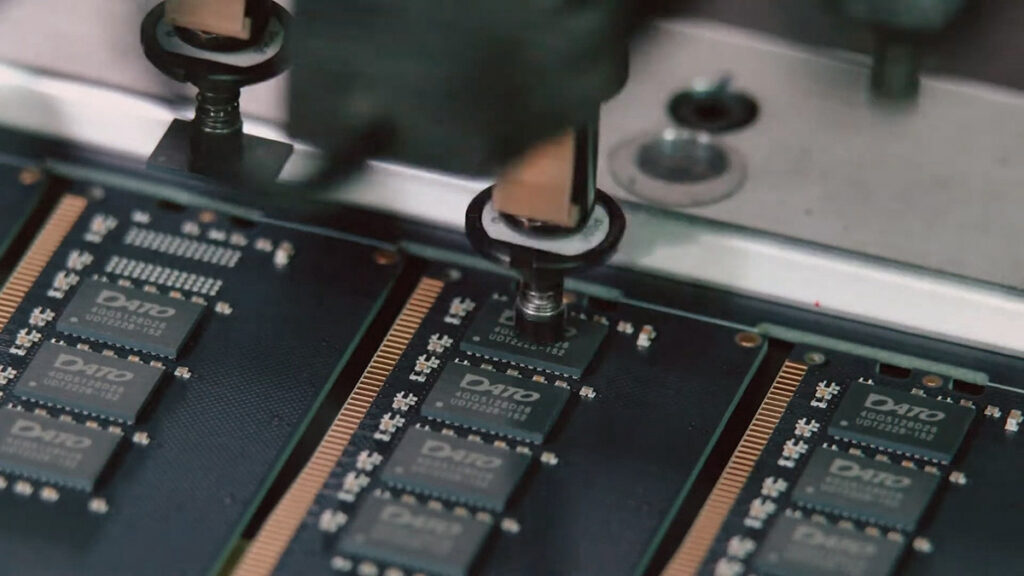

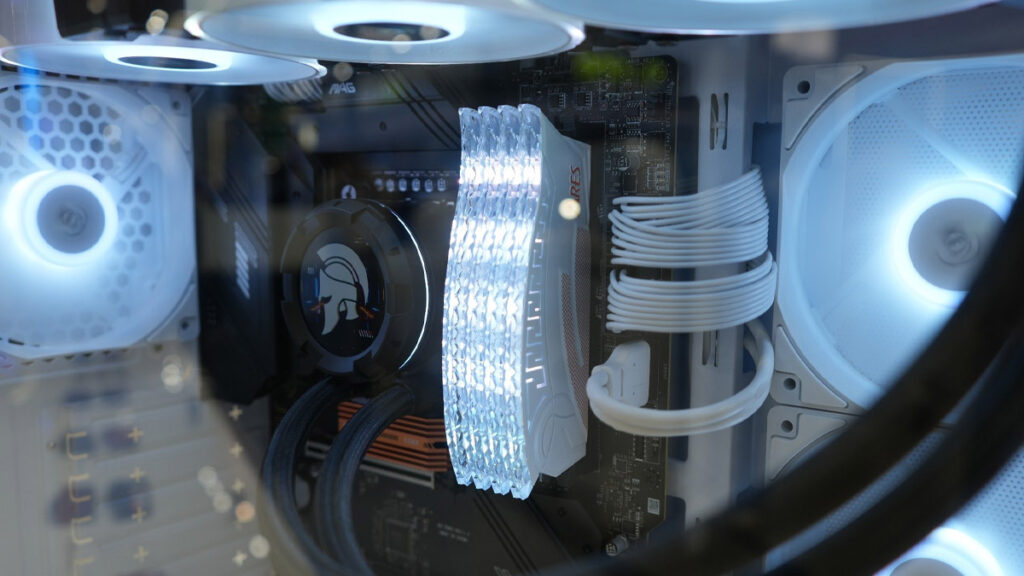

1. DRAM: The "Active Workspace" for Local AI Models

When you run an AI model locally, the model’s weights must be loaded into your system’s memory to run efficiently. In edge computing, your DRAM dictates whether an AI application runs smoothly, slows to a crawl, or fails to load at all.

Bandwidth Dictates Response Time

AI doesn’t just need a lot of memory; it needs fast memory. High-frequency DDR5 memory (e.g., 6000 MT/s and above) provides the bandwidth required to feed data to the CPU and NPU without stalls, so your local AI assistant can respond to your prompts in real time.

Capacity is the New Bottleneck

A 7-billion-parameter LLM (like Meta’s Llama or Mistral) can easily consume 4GB to 8GB of memory just to hold its weights, depending on how heavily it is quantized. Once you start generating text or processing data, that footprint expands. In the AI PC era, 16GB of RAM is no longer enough for power users; 32GB of DDR5 RAM is the new baseline, and 64GB is recommended for creators running complex AI workflows.
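As a rough illustration of the capacity and bandwidth figures in this section, the sketch below estimates how much memory a model's weights occupy and what ceiling DRAM bandwidth places on text generation. It rests on several assumptions: weights dominate the footprint, the system runs dual-channel memory with a 64-bit bus per channel, and every weight is read from DRAM once per generated token. The function names are illustrative, and real systems will land below these theoretical ceilings.

```python
# Back-of-the-envelope sizing for a local LLM. All figures are rough
# estimates: real runtimes add KV-cache, activations, and OS overhead.

def weights_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def dram_bandwidth_gbs(mt_per_s: int, channels: int = 2, bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s.

    transfers per second x bytes per transfer x number of channels.
    The dual-channel, 8-byte-bus defaults are assumptions.
    """
    return mt_per_s * 1e6 * bus_bytes * channels / 1e9


def max_tokens_per_s(bandwidth_gbs: float, model_gb: float) -> float:
    """Upper bound on generation speed if every weight is read once per token."""
    return bandwidth_gbs / model_gb


if __name__ == "__main__":
    bw = dram_bandwidth_gbs(6000)  # DDR5-6000, dual channel
    for bits in (4, 8, 16):
        size = weights_gb(7, bits)
        print(f"7B @ {bits:2d}-bit: ~{size:4.1f} GB of weights, "
              f"at most ~{max_tokens_per_s(bw, size):.0f} tokens/s at {bw:.0f} GB/s")
```

Under these assumptions, a 7B model quantized to 4 bits needs about 3.5 GB for its weights and 7 GB at 8 bits, which lines up with the 4GB to 8GB range quoted above. The same arithmetic shows why bandwidth matters: doubling the model's size in memory roughly halves the best-case tokens per second.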

2. NVMe SSDs: The High-Speed Vault for Massive Datasets

AI models are inherently massive. High-resolution image generators, localized knowledge databases, and intricate voice models can take up dozens or even hundreds of gigabytes of storage space. Your SSD is responsible for securely housing this data and serving it up instantaneously.

Eliminating Load-Time Friction

Waiting for a 20GB AI model to load off a slow hard drive or a SATA SSD defeats the purpose of “instant” edge computing. High-performance PCIe Gen4 and Gen5 NVMe SSDs (offering sequential read speeds from around 7,000 MB/s to over 14,000 MB/s) allow these massive models to load into DRAM in a matter of seconds.
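To put numbers on that difference, here is a minimal sketch that divides the 20GB model size by each drive's sequential read speed. The NVMe speeds are the ones quoted above; the hard drive and SATA figures are typical values assumed here for comparison, and the result is a best case that ignores file system overhead, decompression, and any throttling.

```python
def load_seconds(model_gb: float, read_mb_per_s: float) -> float:
    """Best-case time to stream a model file at a given sequential read speed."""
    return model_gb * 1000 / read_mb_per_s


# Sequential read speeds in MB/s. The NVMe values come from the article;
# the hard drive and SATA values are assumed typical figures.
DRIVES = {
    "Hard drive (assumed)": 150,
    "SATA SSD (assumed)": 550,
    "PCIe Gen4 NVMe": 7000,
    "PCIe Gen5 NVMe": 14000,
}

if __name__ == "__main__":
    for name, speed in DRIVES.items():
        print(f"{name:22s} {load_seconds(20, speed):6.1f} s")
```

With these inputs, the Gen4 drive streams the model in roughly 3 seconds and the Gen5 drive in about 1.5 seconds, while the assumed SATA SSD takes over half a minute and the hard drive more than two minutes.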

Sustained Performance & Thermal Stability

AI workloads often require continuous, intensive reading and writing. This sustained workload can cause drives to overheat and throttle their speeds. High-end Gen5 SSDs equipped with active cooling solutions (like micro-fans and skived heatsinks) are becoming essential to maintain peak performance during heavy AI processing.

3. The Rise of Smart, AI-Driven Portable Storage

Edge computing isn’t limited to the components permanently installed on your motherboard. The AI PC era is extending to how we transport and interact with our data on the go, giving rise to AI Portable SSDs.

Edge Computing on the Move

Next-generation portable storage is evolving beyond simple flash drives. Integrated with dedicated smartphone and PC applications, AI Portable SSDs can now perform edge computing tasks directly from the drive itself.

Intelligent Data Management

Imagine an external drive that uses local AI to automatically categorize thousands of photos, recognize faces, or transcribe audio files without ever connecting to a cloud server. Furthermore, these smart drives use companion apps to continuously monitor the SSD’s SMART health data in real time, using predictive algorithms to warn users of potential file conflicts or hardware degradation before any data is lost.
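As a simplified illustration of health monitoring, the sketch below checks a handful of drive health values against fixed thresholds. This is a plain threshold check, not the predictive algorithm a vendor's companion app may use. The dictionary keys are illustrative names modeled on fields in the NVMe health log, the 90% wear limit is an arbitrary assumption, and reading the values from a real drive is left out entirely.

```python
def health_warnings(log: dict) -> list[str]:
    """Return human-readable warnings for a dict of drive health values.

    Keys and thresholds are hypothetical; missing keys are treated as healthy.
    """
    warnings = []
    if log.get("critical_warning", 0) != 0:
        warnings.append("drive reports a critical warning flag")
    if log.get("available_spare", 100) <= log.get("available_spare_threshold", 10):
        warnings.append("spare capacity is at or below its threshold")
    if log.get("percentage_used", 0) >= 90:  # assumed wear limit
        warnings.append("rated endurance is nearly consumed")
    if log.get("media_errors", 0) > 0:
        warnings.append("media errors have been recorded")
    return warnings


if __name__ == "__main__":
    sample = {  # made-up readings for demonstration only
        "available_spare": 8,
        "available_spare_threshold": 10,
        "percentage_used": 95,
        "media_errors": 2,
    }
    for message in health_warnings(sample):
        print("WARNING:", message)
```

A companion app could run a check like this on a schedule and track how the values change over time, which is where trend-based prediction would come in.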

The Bottom Line for Consumers

The transition to the AI PC era means that treating storage and memory as an afterthought is no longer an option. If you are building, buying, or upgrading a PC to take advantage of local AI features like Microsoft Copilot+, local LLMs, or AI-powered creative software, your storage ecosystem is critical.

Investing in high-capacity DDR5 DRAM, a lightning-fast PCIe Gen4/Gen5 SSD, and intelligent portable storage will ensure your system isn’t just “AI-Ready” in name, but truly capable of handling the heavy demands of edge computing.
